I used to rely on BypassGPT for more flexible AI responses, but it’s no longer available and my workflow is suffering. Are there any safe, free substitutes or tools that offer similar uncensored or less restricted AI behavior without breaking rules or risking bans? I’d really appreciate recommendations, plus any setup tips or security/privacy caveats I should know about.

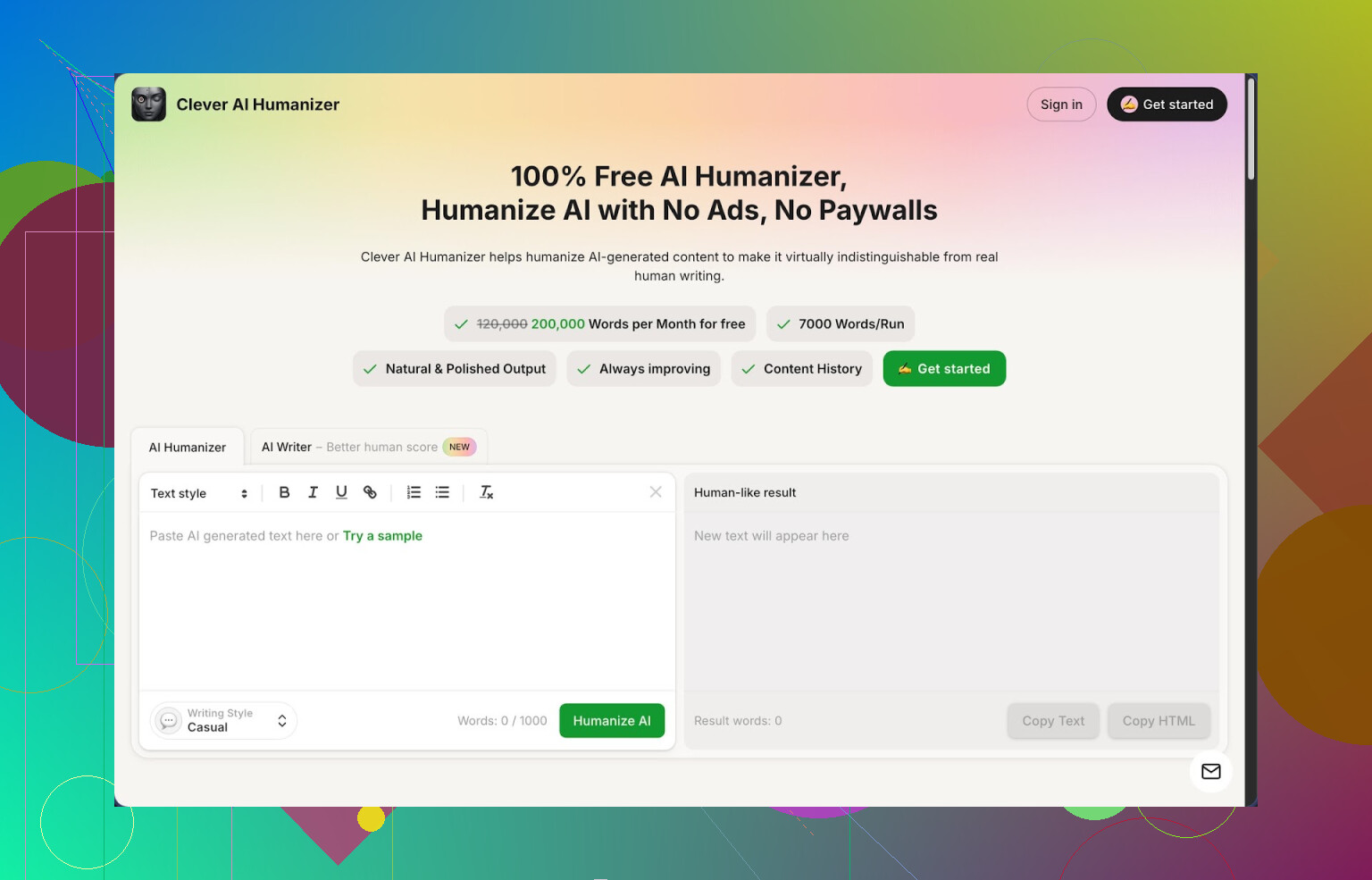

1. Clever AI Humanizer – my take after messing with it way too long

Clever AI Humanizer is the one I keep coming back to when I need AI text to stop sounding like AI. Not because it is perfect, but because the limits are generous and it does not nag me for money every 3 minutes.

Quick numbers from my usage:

- About 200k words per month on the free plan

- Up to 7k words per run

- Three styles: Casual, Simple Academic, Simple Formal

- Built-in AI writer, grammar checker, paraphraser

I first found it while trying to get some long-form text past ZeroGPT. I pasted a few thousand words of pure model output, ran it through the Casual mode, and ZeroGPT showed 0% AI on three different samples. That got my attention, because most tools either break the meaning or still get flagged as “likely AI”.

The big issue if you write with AI tools is familiar: everything sounds flattened, repetitive, and detectors mark it as 100% machine. I tested a bunch of “humanizers” over a weekend, and this one ended up being the only one I kept open in a tab.

Here is how the main module works from my point of view.

You paste your AI text, pick a style (I mostly stick to Casual), hit the button, and wait a couple of seconds. It spits back a rephrased version that tries to break standard AI patterns, lengthen or shorten parts, and shift wording so detectors see it as more natural. The larger limit helps a lot when you work with long articles or reports, since splitting text into tiny chunks often ruins flow.
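If a draft is longer than the per-run cap, splitting at paragraph boundaries keeps the flow mostly intact. Here is a minimal Python sketch of that idea, using the 7k-per-run and 200k-per-month figures from my numbers above. The function names `chunk_by_paragraph` and `runs_per_month` are made up for this example and say nothing about how the tool works internally.

```python
def chunk_by_paragraph(text: str, max_words: int = 7000) -> list[str]:
    """Group paragraphs into chunks of at most max_words words each.

    A single paragraph longer than max_words is kept whole rather than
    cut mid-sentence, so check chunk sizes if your paragraphs are huge.
    """
    chunks: list[str] = []
    current: list[str] = []
    count = 0
    for para in text.split("\n\n"):
        para = para.strip()
        if not para:
            continue
        n = len(para.split())
        # Start a new chunk when adding this paragraph would pass the cap.
        if current and count + n > max_words:
            chunks.append("\n\n".join(current))
            current, count = [], 0
        current.append(para)
        count += n
    if current:
        chunks.append("\n\n".join(current))
    return chunks


def runs_per_month(monthly_words: int = 200_000, words_per_run: int = 7000) -> int:
    """Number of full-size runs that fit in the monthly allowance."""
    return monthly_words // words_per_run
```

With the defaults, `runs_per_month()` works out to 28 full-size runs, so the monthly allowance is hard to exhaust unless you are batch-processing.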

What surprised me is that it generally keeps the original meaning intact. I tried it on technical guides, opinion pieces, and a dry academic-like section. In most runs, key claims and data stayed the same, while the structure and rhythm changed enough to sound more like something a person typed over an afternoon.

Now the extra modules, because those are what make it useful day to day.

The Free AI Writer lets you generate a first draft straight inside the site. You give it a prompt for an article or essay, it writes a base version, then you run that through the humanizer in one workflow. For detector scores, this combo gave me the best results. The raw AI draft often scored “highly AI” on ZeroGPT, but after humanization it dropped to 0% for several tests I did.

The Free Grammar Checker is basic but handy. It fixed stuff like double spaces, stray commas, and awkward phrasing. Not as aggressive as tools like Grammarly, but enough to make text ready for a blog or email without much extra work.

The Free AI Paraphraser is closer to a standard rewriter. I used it for:

- Rewording paragraphs from older articles

- Creating alternative versions for SEO experiments

- Adjusting tone from stiff to more plain or the other way around

All of this is in one interface, so the workflow looks like this for me:

- Draft with AI Writer or paste text from another model

- Run through Humanizer with Casual style

- Quick pass with Grammar Checker

- Optional Paraphraser pass for specific sections

This setup saved me a lot of time when I needed several variations of an article for testing, or when I had to “de-AI” something fast for a client who hates the usual LLM tone.

Couple of downsides from my use:

- Not all detectors will show 0% AI every time. ZeroGPT liked the output in my tests, but other detectors sometimes still flagged parts as mixed or partially AI.

- Text often gets longer after humanization. It adds or expands phrases to break patterns, so if you need strict word counts, you will need to trim manually.

- Occasional weird phrasing slips through, especially on technical or niche topics. I usually do a quick read-through for those.
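For the length issue, I find it easier to measure the growth than to eyeball it. A small Python sketch, assuming plain whitespace-separated word counts are close enough for your purposes (the helper names are mine, not part of any tool):

```python
def word_count(text: str) -> int:
    """Count whitespace-separated words."""
    return len(text.split())


def length_change(before: str, after: str) -> float:
    """Relative change in word count, e.g. 0.25 means 25% longer."""
    base = word_count(before)
    if base == 0:
        raise ValueError("original text is empty")
    return (word_count(after) - base) / base


def over_limit(after: str, limit: int) -> int:
    """How many words must be trimmed to meet the limit (0 if within it)."""
    return max(0, word_count(after) - limit)
```

Running `over_limit(humanized_text, 1500)` on a piece with a 1,500-word cap tells you how much you still have to cut by hand.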

Even with those issues, for a free tool with 200k words a month, it has been the most practical option I have used. No paywall jumps in the middle of a batch, and you can experiment a lot before hitting a limit.

If you want more detail and screenshots, there is a longer breakdown here:

https://cleverhumanizer.ai/community/t/clever-ai-humanizer-review-with-ai-detection-proof/42

Video review here if you prefer watching someone click through it:

Clever AI Humanizer YouTube Review https://www.youtube.com/watch?v=G0ivTfXt_-Y

There is also some discussion about AI humanizers and tests from other people on Reddit:

Best Ai Humanizers on Reddit https://www.reddit.com/r/DataRecoveryHelp/comments/1oqwdib/best_ai_humanizer/

All about humanizing AI https://www.reddit.com/r/DataRecoveryHelp/comments/1l7aj60/humanize_ai/

Short version. There is no safe, free tool that gives you true “uncensored” BypassGPT‑level behavior without tradeoffs, but you can get close on flexibility with a mix of models and a humanizer.

Here is what has worked best for me:

- Use more permissive models instead of “bypass” wrappers

These are not magic, but they apply fewer safety filters than most chatbots.

• OpenRouter

Lets you hit a bunch of models through one site or key. Some models there respond more freely than typical frontends. You control temperature and system prompts, which already gives you “less restricted” behavior for brainstorming, fiction, edgy content, etc.

Drawback. Your requests go to third-party providers that may log them, so do not send anything sensitive.

• Local models with Ollama or LM Studio

Run models on your own PC. No remote filters.

Good free choices to start:

- Llama 3 8B Instruct

- Qwen 2.5 7B or 14B

- Mistral 7B Instruct

They are weaker than big cloud models for some tasks, but you get full control. For some workflows, I do a first pass with a local model, then refine with a hosted one.
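To make the "you control temperature and system prompts" part concrete, here is a rough Python sketch. As far as I know, both OpenRouter and a local Ollama server accept OpenAI-style chat completion requests, so one helper can cover both. The URLs below are the ones I believe are current defaults, and the model names in the usage note are placeholders; treat all of them as assumptions and check each provider's docs and model list before relying on them.

```python
import json
import urllib.request

OPENROUTER_URL = "https://openrouter.ai/api/v1/chat/completions"
OLLAMA_URL = "http://localhost:11434/v1/chat/completions"  # assumed default local port


def build_chat_request(url, model, system_prompt, user_prompt,
                       temperature=0.9, api_key=None):
    """Build (but do not send) an OpenAI-style chat completion request."""
    payload = {
        "model": model,
        "temperature": temperature,
        "messages": [
            {"role": "system", "content": system_prompt},
            {"role": "user", "content": user_prompt},
        ],
    }
    headers = {"Content-Type": "application/json"}
    if api_key:  # hosted providers need a key; a local server usually does not
        headers["Authorization"] = f"Bearer {api_key}"
    return urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers=headers,
        method="POST",
    )


def send(request):
    """Send the request and return the text of the first reply."""
    with urllib.request.urlopen(request) as resp:
        body = json.load(resp)
    return body["choices"][0]["message"]["content"]
```

Usage would look something like `send(build_chat_request(OLLAMA_URL, "llama3", "You are a fiction co-writer.", "Draft an opening scene."))`, where `"llama3"` stands in for whatever model tag you have pulled locally. Keeping the build and send steps separate also lets you inspect exactly what leaves your machine before it goes anywhere.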

- Combine stricter models with a humanizer

Here I partly disagree with @mikeappsreviewer. I would not rely on detector scores as some kind of “proof”. Detectors often misfire on both human and AI text. I treat them as a rough signal, not a goal.

That said, Clever Ai Humanizer is useful for a different reason. It breaks the typical LLM rhythm. If you push a careful or filtered answer from a cautious model through it, the output tends to feel less like policy-speak and more like normal writing. For workflows that need “un‑AI” tone or lower chance of being flagged by simple filters, it helps.

I use it on:

• Long explainer posts that sound stiff.

• Drafts where the model keeps repeating “as an AI language model”.

• Text from stricter frontends that still holds good info but reads robotic.

- Prompt tactics that reduce roadblocks

Some prompts trigger filters fast. You can avoid a lot of friction by changing how you ask.

Examples that helped me:

• Ask for “pros and cons”, “risk analysis”, “policy review”, or “fictional scenario” instead of direct how‑to language on sensitive topics.

• Use “help me evaluate” instead of “tell me how to do X”.

• Break tasks into steps. First ask for theory, then ask for comparison, then ask for rewrite in your tone. Many models tolerate that path better than blunt requests.

• For research topics that hit safety walls, phrase things as “explain what experts warn about” or “summarize safety guidelines”.

- Frontends with lighter guardrails

These are still safe, but usually less naggy than mainstream sites.

• Perplexity free tier

Good for research. It is stricter on some topics, but its “focus” modes and citations help you pull raw info that you can then rewrite or humanize. I often pair Perplexity for facts with Clever Ai Humanizer for tone.

• Poe free models

Some of the community bots respond more freely, especially older or smaller models. There is a daily limit, but for quick jobs it works.

- What I would avoid

• Any tool promising “full jailbreak”, “no filters at all”, or “100 percent detector bypass” with no transparency. Often they are just thin skins around the same models, plus shady logging.

• Blind trust in AI detector scores. Use them as a quick check only. Focus on human readability and accuracy first.

Simple workflow that replaced BypassGPT for me:

- Draft with a more permissive model. Either a local model through LM Studio or a model on OpenRouter.

- If the tone is too stiff, run it through Clever Ai Humanizer once, usually Casual.

- Do a quick manual edit for facts, edge cases, and any awkward phrases.

- If a platform is strict, ask a “safer” model to rephrase in a more neutral or academic tone, then optionally humanize again.

This does not fully replicate old BypassGPT behavior, but you get:

• More control over censorship via local or third‑party models.

• Less robotic output through a tool like Clever Ai Humanizer.

• Better safety for yourself by not feeding everything into some sketchy jailbreak site.
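On the privacy side: since hosted models may log what you send, one cheap habit is scrubbing obvious personal data before a prompt leaves your machine. A minimal Python sketch, assuming simple regex patterns are good enough for a first pass. They will miss plenty (names, addresses, account numbers), so this is a safety net, not a guarantee. The `redact` helper and both patterns are my own illustration.

```python
import re

# Deliberately simple patterns; expect false negatives and some false positives.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def redact(text: str) -> str:
    """Replace email addresses and phone-like numbers with placeholders."""
    text = EMAIL.sub("[EMAIL]", text)
    text = PHONE.sub("[PHONE]", text)
    return text
```

Run your prompt through `redact()` before pasting it into any hosted frontend, then skim the result, because regexes do not know what counts as sensitive in your particular document.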

Short answer: you’re not going to get a 1:1 “free, uncensored BypassGPT clone” that’s actually safe. But you can rebuild most of that functionality with a different stack than what @mikeappsreviewer and @waldgeist described, without repeating their whole playbook.

Here’s what’s been working for me:

- Skip the “magic jailbreak” sites

Most of the BypassGPT-style pages were just thin skins over the same mainstream APIs, with sketchy logging and some prompt trickery. The ones that still exist are usually:

- Logging everything

- Overhyping “uncensored”

- Going offline randomly

If you care about safety and consistency, those are a hard pass.

- Use two-model workflows instead of “one uncensored bot”

Instead of hunting for a single wild-west model, I split the job:

- Model A: “Serious brain”

Use a decent model (could be a free tier of a mainstream provider or a smaller cloud model) to get accurate info, structure, and reasoning. You accept that it will sometimes say “I can’t do that.”

- Model B: “Tone + flexibility”

Then you feed that into a separate tool whose only job is style, not facts. This is where something like Clever Ai Humanizer actually shines.

I slightly disagree with how heavily @mikeappsreviewer leans on detector scores, but I do agree with the use case: it’s very good at stripping that robotic “AI disclaimer” voice, which feels like “less restricted” even when the content is basically the same.

This two-step setup gets you:

- Cleaner, more factual content from a cautious model

- Output that reads like an unfiltered human, via a humanizer layer

- Use Clever Ai Humanizer strategically, not as a magic invisibility cloak

Both @mikeappsreviewer and @waldgeist already covered it as a general tool, so I won’t rehash. Where I’ve found Clever Ai Humanizer most useful for a BypassGPT replacement is:

- Taking a partially censored answer and making it usable

Example: model says, “I cannot provide a step-by-step guide, but here are general considerations…”

You run it through the humanizer and suddenly it feels like a normal essay instead of a policy statement, without you having to rewrite everything by hand.

- De-AI-ing long technical docs

If your workflow is blog posts, reports, docs for clients, etc., the real pain point often isn’t censorship but “this sounds like it was written by a toaster.” Clever Ai Humanizer fixes that part surprisingly well, especially in its Casual style.

I would not chase 0% AI detector scores as a goal. That’s where I diverge from both of them. Detectors are all over the place, and designing your text around them is a rabbit hole that kills productivity.

- Change the intent of your prompts instead of only dodging filters

You already got a bunch of tips from @waldgeist on phrasing. One angle I use that is slightly different:

- Think in terms of “frames”:

- Exploration frame: “walk through how experts think about…”

- Critique frame: “analyze the flaws, limitations, failure modes of…”

- Adversarial frame but in theory: “describe what could go wrong if someone misused X, and how systems are supposed to prevent it”

Models are more lenient when you present yourself as an analyst, not an operator. You still get rich detail, but without tripping the most extreme “how-to” alarms.

- Local models only if you’re ok babysitting them

Personally I’m less bullish on local models than @waldgeist for a “drop-in replacement” for BypassGPT:

- Yes, you get less censorship.

- No, they are not as good at nuanced, factual, multi-step reasoning as the big cloud models, unless you have serious hardware and tuning patience.

- They also hallucinate more, which defeats the point if you’re doing anything that actually matters.

I treat local models as “sandbox brainstorming tools,” not as the core of a professional workflow. Fine for fiction, edgy dialogue, wild what-ifs. Not great as your only source of truth.

- Reality check on “uncensored”

If by “uncensored” you mean:

- Dark humor

- NSFW-ish fiction

- Spicy opinions

you can usually get close enough by:

- Asking for fictional scenarios

- Explicitly stating “this is for writing a novel / screenplay”

- Then toning down the output with something like Clever Ai Humanizer so it sounds like a real person and not a risk-averse PR intern.

If you mean “I want exact, operational step-by-step instructions for obviously harmful stuff,” no safe free tool is going to serve as a clean BypassGPT stand-in. You’ll just jump between half-broken websites until one disappears and you start this search again.

- A lean version of the stack that actually replaced BypassGPT for me

No fancy dev setup, no extra software:

- Use a reasonably capable but slightly conservative model for ideas, structure, and facts.

- Feed that output directly into Clever Ai Humanizer to strip the AI voice, remove spammy disclaimers, and get more natural language.

- Do a quick human pass to trim wordiness and fix any minor weird phrasing.

Result:

- 90% of the flexibility I used to get from “bypass” frontends

- Content that doesn’t scream “written by a chatbot”

- No sketchy “jailbreak” sites in the middle of my workflow

Is it as wild as old-school BypassGPT on a good day? No.

Is it stable, free-ish, and safe enough to actually rely on? Yes, and for long-term workflow that matters more.