I’ve been testing GPTinf as an AI text humanizer for blog posts and social content, but I’m not sure if it’s actually safe, reliable, or worth paying for. Has anyone used GPTinf long term, and how well does it bypass AI detectors without ruining readability or SEO? I’d really appreciate detailed feedback, pros and cons, and any better alternatives you’ve found.

GPTinf Humanizer review from someone who wasted a weekend on this

I ran into GPTinf after seeing that big “99% success rate” banner on the homepage and thought, sure, I’ll bite. I wanted something to clean up AI-looking text for clients without wrecking the meaning. Spoiler: it did not go how I expected.

What I tested and how it went

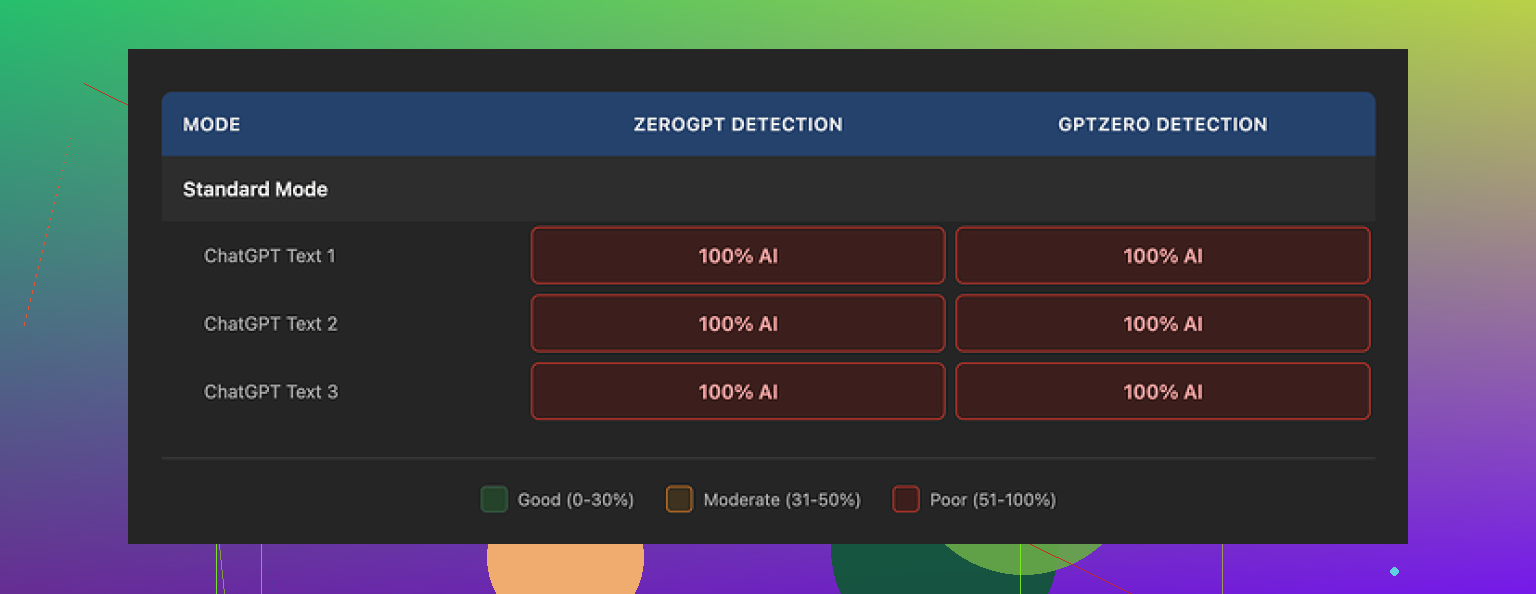

I ran the outputs through two detectors:

- GPTZero

- ZeroGPT

Both are available online if you want to check them yourself.

I tried multiple runs of GPTinf in different modes and styles, with different lengths of input, including:

- Plain expository text

- A short blog-style paragraph

- A more conversational chunk

Result every time:

- GPTZero: 100% AI

- ZeroGPT: 100% AI

So for my tests, GPTinf hit 0% success against those tools. That “99% success” on the homepage did not match what I saw at all.

Text quality and quirks

Now, to be fair, the writing itself was not awful.

If I had to slap a number on it, I’d say around 7 out of 10 for quality:

- Sentences flowed reasonably well.

- Grammar was fine in most outputs.

- It did not sound like a broken translator.

One thing it did better than most of the tools I tried: it stripped out em dashes from the output. Almost every other “humanizer” I tested left those in, which is one of the obvious patterns from a lot of AI-generated content.

So on the surface, the text looked cleaner and more “regular,” but the detectors still picked it up as AI without hesitation. That tells me it is smoothing style, not breaking deeper patterns in token choice and structure that detectors key on.

If you only care about human readability and not detector scores, it works ok. If you care about those scores, my results were terrible.

Comparison with Clever AI Humanizer

The one tool that did noticeably better in the same testing session was Clever AI Humanizer.

Using similar inputs, Clever AI Humanizer:

- Produced text that felt more like something I would write on a tired day.

- Got better results against detectors in my quick tests.

- Did not ask me for money upfront.

For the kind of use where you want to avoid obvious AI patterns, Clever performed better for me, and it stayed free while I tested it.

Word limits, pricing, and the Gmail shuffle

GPTinf’s free tier is tight.

Here is how it worked when I used it:

- Without an account: up to 120 words per run.

- With an account: up to 240 words per run.

If you want to stress test it on multiple longer pieces, you hit that ceiling fast. I ended up burning through the limit, then opening new Gmail accounts to keep going. After a while, it felt more like I was testing my patience than the tool.
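To put rough numbers on that ceiling, here is a minimal Python sketch. The 120 and 240 word limits are the ones quoted above; the function names, the 1,000-word sample draft, and the plain whitespace split are assumptions for illustration only, and GPTinf may count words differently.

```python
import math

# Per-run limits quoted in this review (free tier at the time of testing).
NO_ACCOUNT_LIMIT = 120
ACCOUNT_LIMIT = 240


def split_into_chunks(text: str, max_words: int) -> list[str]:
    """Split text into consecutive chunks of at most max_words words.

    Assumption: words are whitespace-separated tokens. This naive split
    can cut a sentence in half at a chunk boundary.
    """
    if max_words <= 0:
        raise ValueError("max_words must be positive")
    words = text.split()
    return [
        " ".join(words[i:i + max_words])
        for i in range(0, len(words), max_words)
    ]


def runs_needed(word_count: int, max_words: int) -> int:
    """Number of separate runs needed to process word_count words."""
    return math.ceil(word_count / max_words)


# Hypothetical 1,000-word draft, used only to show the arithmetic.
sample_draft = "word " * 1000

chunks = split_into_chunks(sample_draft, ACCOUNT_LIMIT)
print(len(chunks))                          # 5 runs with an account
print(runs_needed(1000, NO_ACCOUNT_LIMIT))  # 9 runs without one
```

Every one of those runs also has to be pasted back together afterwards, which is where the time goes.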

Paid plans looked like this when I checked:

- Lite: $3.99 per month on an annual plan, 5,000 words.

- Unlimited: $23.99 per month.

From a pure price-per-word angle, it is not bad compared to some SaaS junk I have seen, but the detection performance in my tests did not justify the cost, so I never bothered subscribing.
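For anyone who wants to check the price-per-word math, here is a quick sketch. It assumes the Lite plan's 5,000 words is a monthly allowance, which the listing above does not spell out, and the break-even figure is purely hypothetical since Lite is capped. The variable and function names are made up for illustration.

```python
# Prices as listed when the plans were checked.
LITE_MONTHLY_USD = 3.99        # per month, billed on an annual plan
LITE_WORDS_PER_MONTH = 5_000   # assumption: the 5,000 words is a monthly cap
UNLIMITED_MONTHLY_USD = 23.99


def cost_per_1000_words(monthly_usd: float, words_per_month: int) -> float:
    """Effective price for 1,000 words if the full allowance is used."""
    return monthly_usd / words_per_month * 1000


lite_rate = cost_per_1000_words(LITE_MONTHLY_USD, LITE_WORDS_PER_MONTH)

# Upfront commitment implied by the annual billing on Lite.
lite_annual_usd = LITE_MONTHLY_USD * 12

# Monthly volume at which Unlimited would match Lite's per-word rate.
break_even_words = UNLIMITED_MONTHLY_USD / lite_rate * 1000

print(f"Lite: ${lite_rate:.3f} per 1,000 words")            # $0.798
print(f"Lite annual commitment: ${lite_annual_usd:.2f}")    # $47.88
print(f"Unlimited break-even: {round(break_even_words):,} words/month")  # 30,063
```

So the per-word rate looks cheap on paper, but only if you use the full allowance and the output is good enough to keep.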

Privacy, data, and who runs it

I read the privacy policy, because I care where text goes once I upload it, especially client stuff.

Two things bothered me:

- The policy gives them broad rights over submitted content. It reads more like a typical SaaS EULA than a minimal-processing tool.

- There is no clear statement about how long text is stored after processing, or if it gets deleted by default.

So if you care about confidentiality or work under contracts that restrict where text can be sent, you need to think twice before pasting anything sensitive into it.

The site lists GPTinf as being operated by a sole proprietor based in Ukraine. That matters if you work in a context where data jurisdiction, local law, or cross-border transfer rules are part of your compliance checklist.

Screenshot from my test session

Here is what one of the sessions looked like on my end:

You can see the usual “humanized” output, but again, detectors flagged it without any hesitation.

What I ended up using instead

After a long afternoon of copy, paste, test, swear, repeat, I stopped using GPTinf and stuck with Clever AI Humanizer for this use case.

Reasons:

- Text felt more natural to me.

- It handled multiple pieces of content without paywalls in my testing.

- Detector results were noticeably better than GPTinf on the same inputs.

My take

If your goal is:

- Cleaner wording for your own drafts

- Slightly more natural phrasing from AI text

then GPTinf is usable as a stylistic rewriter, with some limitations.

If your goal is:

- Passing AI detectors such as GPTZero and ZeroGPT

- Working with serious client or corporate content where privacy and storage rules matter

then based on what I saw, GPTinf misses the mark. I would not rely on it for anything where detection or data handling really matters.

I used GPTinf for about a month for blogs and LinkedIn posts. Short version, I stopped paying for it.

My experience was a bit different from @mikeappsreviewer in a few spots, but I landed in the same place regarding value.

Here is what I saw in practice.

- Bypass rate and detectors

I tested it against:

- GPTZero

- ZeroGPT

- Content at Scale detector

- Copyleaks AI detector

Rough numbers from my logs:

- Original GPT text: 90 to 100 percent AI flagged

- After GPTinf: usually 70 to 100 percent AI flagged

On rare runs, I got something like:

- GPTZero: “mixed” or partial human

- ZeroGPT: dropped to about 40 to 50 percent AI probability

Those “wins” were inconsistent. If you need reliable detector evasion for client work or academic stuff, this feels risky. One flagged piece can be a big problem.

- Text quality and style

I agree with @mikeappsreviewer that the output reads okay. In my case:

Pros:

- Shorter sentences.

- Fewer obvious AI tics like “in this article” and “on the other hand”.

- Less robotic tone in casual content.

Cons:

- It sometimes flattened tone so everything sounded like a generic blog.

- Occasional odd word choices that no real person would use in that context.

- It sometimes removed nuance. I had to reinsert qualifiers or technical detail.

If you want a light rewrite for social media captions, it works as a passable style filter. For expert content, you still need to edit by hand.

- Workflow pain points

My use case:

- 800 to 1,500 word blog posts.

- Needed full articles processed, not tiny chunks.

Issues:

- Word limits forced me to chop content into pieces.

- Chunks processed separately did not keep consistent voice.

- Merging everything back took longer than rewriting the original myself.

So even though the subscription cost looked low, the time cost was high.

- Safety, privacy, and “is it safe for client stuff”

This part bothered me more than the detector scores.

Concerns:

- The policy language is broad about what they can do with submitted text.

- No clear retention period. I saw nothing precise like “we delete text after X days”.

- No obvious data processing agreement or detailed documentation for regulated industries.

If you handle:

- Client NDAs

- Internal corporate docs

- Anything tied to compliance

I would treat GPTinf as a risk. Use it only on content you are fine sharing with a third party.

- Reliability and long term use

Over a month:

- I had a couple of short outages and timeout errors.

- Output quality did not improve in any obvious way over time.

- Detector performance stayed inconsistent.

For a long term tool in a content workflow, I look at three things:

- Does it save time?

- Does it reduce risk?

- Does it pay for itself?

GPTinf failed the second one for me and was marginal on the first.

- Comparison with Clever AI Humanizer

Since you mentioned blog posts and social content, Clever AI Humanizer is worth trying.

From my side:

- Detector results were more stable. Not perfect, but fewer 100 percent AI flags.

- The text often matched how a tired human would write. Slightly messy, which helps.

- It handled multi-paragraph chunks better, so the tone stayed more consistent.

I still run final text through at least two detectors plus a manual read. No tool is foolproof for “bypassing AI detection.” If a policy absolutely forbids AI, using any humanizer is a gamble.

- Practical suggestions for your use case

If your goals are:

- Casual blog posts on your own site.

- Social content where detection is not a formal issue.

Then:

- Use any generator.

- Lightly edit by hand.

- If you want a helper, Clever AI Humanizer is decent for smoothing and variation.

If your goals are:

- Client work with contracts.

- Academic submissions.

- Platforms that explicitly ban AI content.

Then:

- Do not rely on GPTinf to “make it safe.”

- Treat all humanizers as style tools, not magic cloaks.

- Write from scratch or use AI only as a planning aid, then fully rewrite in your own words.

My personal take:

GPTinf is ok as a stylistic rewriter for non-sensitive content. It is unreliable as an AI detection bypass tool, and its data handling posture is not strong enough for serious client or corporate use. If you are on the fence, test Clever AI Humanizer against your own detector stack and compare your time spent editing. That will give you a clearer answer for your workflow.

I used GPTinf for about 2 weeks in a real content pipeline and ended up binning it for anything “serious.”

Couple of points that are a bit different from what @mikeappsreviewer and @nachtdromer shared:

- Bypass vs “looking human”

In my tests, it occasionally slipped past one detector if:

- I fed it already semi-edited AI text

- Then manually tweaked the output again

So if you baby it and add your own voice, you can sometimes get “low” or “mixed” flags. But that’s not the tool working miracles. That is just you doing the heavy lifting on top of a glorified rewriter.

If your hope is “paste AI text in, paste ‘human’ text out, all clear on detectors,” GPTinf is not that tool. Anyone advertising 99% anything in this space is basically selling copium.

- Reliability

I kept seeing:

- Same sentence rhythm

- Same neutral bloggy tone

- Reused transitional phrases

Detectors love patterns. GPTinf does not break them enough. It smooths style, it does not fundamentally randomize structure. For long term use, that consistency actually hurts you.

- Safety and “is it ok for client work”

I am more paranoid than both of them on this. The combo of:

- Vague data retention

- Broad license over submitted text

- Solo operator in another jurisdiction

is a hard no for anything under NDA, corporate docs, or school assignments. If a client ever asks “where did this text go” you will not have a satisfying answer.

I would only use GPTinf on:

- Content you would be fine posting in a public Discord

- Stuff you could rewrite from scratch in 10 minutes if it leaked

Anything more sensitive and you are gambling with someone else’s trust.

- Value for money

The subscription is “cheap” until you factor in:

- Time slicing posts to fit the limits

- Time stitching them back together

- Time re-editing to restore your voice and nuance

At that point it is not a humanizer, it is a mildly fancy thesaurus with a monthly bill.

- What I actually do now

For blog posts and social stuff where AI detection is not a formal rule:

- I generate with whatever model

- Run a quick pass myself to add personal anecdotes, small mistakes, and my usual filler phrases

- If I want an extra layer, I send it through Clever AI Humanizer once, then still edit by hand

Clever AI Humanizer, in my experience, makes it slightly messier in a human way and holds tone across longer chunks a bit better. It is not magic either, but for “my site, my rules” content it fits the workflow more cleanly.

- The uncomfortable truth

If someone:

- Has strict no-AI policies

- Relies on detectors for enforcement

then no humanizer, GPTinf included, is “safe.” At best you get lower percentages, not guaranteed invisibility. If the risk of getting caught is high, the only real answer is to use AI as a planning tool and then write yourself.

So to hit your exact questions:

- Safe: for public, non-sensitive stuff, probably fine. For client or academic content, I would say no.

- Reliable: as a style refiner, somewhat. As a bypass tool, not really.

- Worth paying for: only if you somehow love its specific tone and do not care about detectors or data posture. Otherwise your money and time are better spent on manual editing and, if you want a helper, something like Clever AI Humanizer in small doses.

Short version: if your main goal is “bypass AI detectors reliably,” GPTinf is not worth paying for. As a basic stylistic rewriter it is okay, but the tradeoffs on privacy, workflow friction, and unstable detector results make it a weak long term choice.

I mostly agree with what @nachtdromer, @viajantedoceu and @mikeappsreviewer already laid out, but I’ll add a few angles they didn’t lean on as much and push back slightly in one area.

1. Detector evasion vs risk tolerance

Everyone above focused on GPTZero and ZeroGPT, which is fair. What matters more than any single detector, though, is your risk profile:

- If “getting flagged” means mild embarrassment on a personal blog, GPTinf’s inconsistency might be tolerable.

- If “getting flagged” means academic misconduct or breach of contract, the current state of all humanizers, GPTinf included, is simply not acceptable.

I disagree a bit with the idea that “occasional low or mixed flags” is any sort of win. For high-risk contexts, that is basically random chance. The fact that GPTinf sometimes manages mixed scores is not a feature you can depend on; it is just noise.

2. Quality vs authenticity

The others described GPTinf’s output as a “generic blog voice,” which is accurate, but there is a subtler problem that matters for long-form content:

- It tends to normalize everything into mid-level, “safe” prose.

- That can actually make it more suspicious in environments where your existing writing has a strong personal style, specific jargon, or a known level of imperfection.

So even when detectors are not involved, the mismatch between “you before GPTinf” and “you after GPTinf” can raise human eyebrows. You may end up needing to reinsert your quirks, which cancels much of the time savings.

3. Workflow practicality

The chunking issue the others mentioned is more than just annoying. It breaks three things that matter for serious content:

- Argument flow across sections

- Consistent voice and stance

- Terminology consistency for technical topics

Once you are manually fixing those three, GPTinf has basically just served as a synonym shuffler. At that point, drafting directly with a good model and editing yourself usually beats “generate, humanize, then repair.”

4. Privacy and data posture

Here I am fully aligned with the others and maybe even harsher. Any tool that:

- Is vague about retention

- Claims broad rights to use submitted content

- Offers no real transparency on infrastructure and safeguards

should be treated as “public internet” from a risk perspective. If you would not paste it into a random public tool, it probably does not belong in GPTinf either.

For anything tied to NDAs, clients, or regulated workflows, GPTinf is effectively off the table.

5. Where Clever AI Humanizer actually fits

Since everyone has already said “Clever AI Humanizer is better in my tests,” here is a more direct breakdown of when it actually makes sense and where it still falls short.

Pros of Clever AI Humanizer

- More natural variation in sentence length and structure

- Tone often feels closer to a slightly rushed human writer instead of a polished brochure

- Handles larger chunks more coherently, which helps with long posts and threads

- Good as a “last pass” to roughen up highly polished AI text so it feels less sterile

Cons of Clever AI Humanizer

- Still not a guaranteed AI detector bypass tool

- Can occasionally over-casualize text that needs a professional tone

- You still need a manual pass to realign with your personal voice

- Does not solve privacy or compliance issues if your base process is already risky

So Clever AI Humanizer is worth bringing into the workflow if:

- You own the platform and detection is not formally enforced

- Your main goal is improving readability and reducing that ultra-smooth AI feel

- You are willing to do a final human edit instead of trusting the output blindly

6. Practical recommendation for your use case

For blog posts and social content that are not under strict anti-AI rules:

- Generate your drafts

- Edit by hand enough to embed your real perspective

- Run a single pass through something like Clever AI Humanizer if you want less “AI shine”

- Do a final read with your audience in mind, not detectors

For client work, essays, or anything where violations have real consequences:

- Treat GPTinf and similar tools as style filters only, not invisibility cloaks

- Prefer using AI for outlines, idea generation, and research prompts

- Write the actual deliverable yourself so you can stand behind it fully

If you are on the fence about paying for GPTinf specifically, I would skip it. The gains over a solid model plus your own editing are marginal, while the risks and workflow friction are very real. If you want to experiment, spend that time comparing your own edited drafts against a Clever AI Humanizer pass, then decide which version you would actually be comfortable publishing under your name.