I recently went through a HIX bypass review and the outcome wasn’t what I expected, but the explanation I received was brief and confusing. I’m trying to understand what factors typically affect a HIX bypass review, what documentation might be missing or weak, and if there’s any way to appeal or request a reconsideration. Can anyone familiar with HIX bypass reviews walk me through how these are evaluated and what steps I should take next to improve my chances?

HIX Bypass AI Humanizer review, from someone who paid for it so you do not have to

I kept seeing HIX Bypass mentioned with the same talking point. “99.5% success rate” slapped next to Ivy League logos and a Shopify logo. The homepage looks confident, almost aggressive about it.

So I ran my own test. Twice. Different prompts, different lengths, different tones.

Here is what happened.

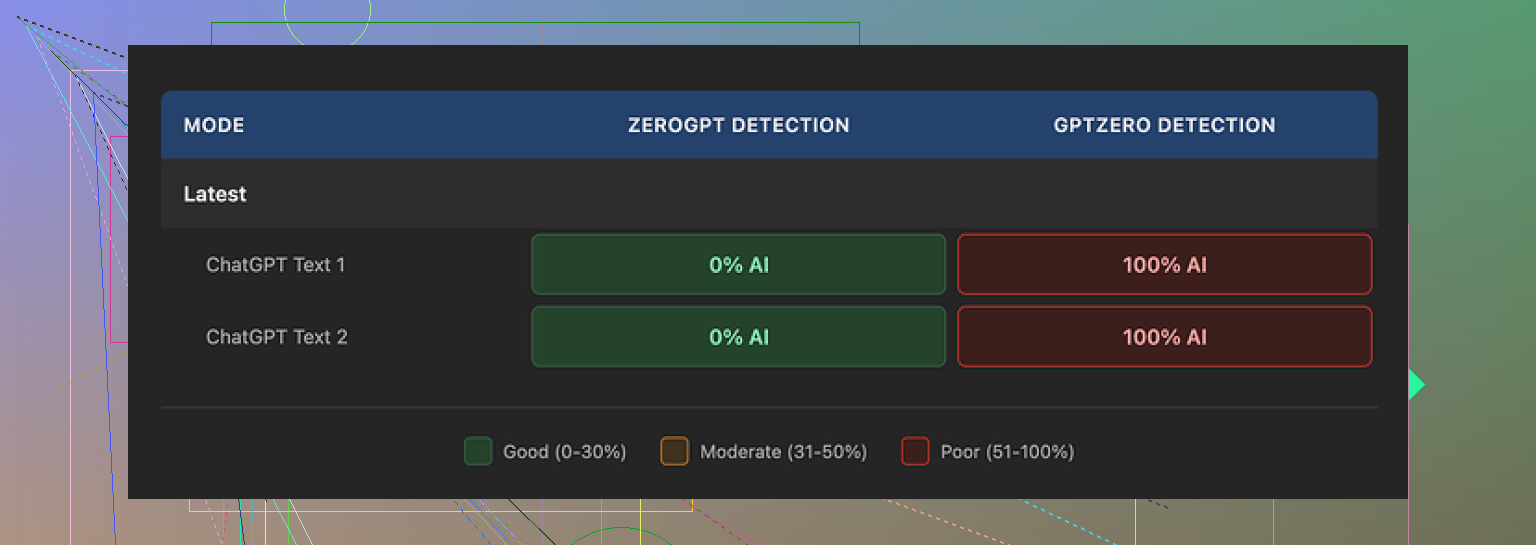

I pushed the outputs through two detectors that people actually use: ZeroGPT and GPTZero.

ZeroGPT said both samples were fine. Passed clean.

GPTZero said both samples were 100% AI.

Not “partially AI”.

Not “mixed”.

Full AI score.

HIX Bypass has its own embedded checker on the site. It claimed both of my processed texts were “Human-written” across almost all detection tools. That turned out wrong for GPTZero. Very wrong.

Screenshot from the run:

Writing quality

On a 1 to 10 scale, I would put the writing at about a 4.

Here is why.

- It kept the em dashes the original AI draft used. Detectors like GPTZero latch onto some of that structure. If the whole point is to humanize, I expected basic cleanup like that.

- One output had a broken sentence fragment in the middle, like it glitched during rewriting.

- Another output wrapped an entire sentence in square brackets for no reason. No context, no explanation. It looked like an editing note that never got removed.

The text felt processed, not written. If you hand this to an editor or teacher or client, I would not trust it to pass a quick human read. Detector scores aside, it looked off.

Free tier and refund traps

The limits are rough if you want to test it properly.

Free tier:

You only get 125 words per account.

That is not 125 words per day.

That is total.

You burn through that in a single paragraph, so you cannot stress test different styles or lengths without paying.

Paid with refund:

There is a “3-day refund” policy, but there is a catch. You must stay under 1,500 words of usage in those 3 days to be eligible.

So if you do what I did, which is run many variants to see how it handles detectors, you blow past that word count very fast. At that point, the refund option is gone.

If you are thinking about trying it, track your word count carefully from the first run. Do not assume you will test freely then refund if you do not like it. The policy is set up in a way where light to moderate testing can disqualify you.
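A minimal sketch of tracking that word count, assuming the 1,500-word cap described above and assuming words are counted by splitting on whitespace. The tool's own counter may count differently, and the names `REFUND_WORD_CAP`, `words_used`, and `refund_status` are made up for illustration.

```python
# Cap taken from the refund policy as described in this post.
REFUND_WORD_CAP = 1500


def words_used(texts):
    """Count whitespace-separated words across every text submitted so far."""
    return sum(len(text.split()) for text in texts)


def refund_status(texts, cap=REFUND_WORD_CAP):
    """Report usage against the cap; eligibility requires staying under it."""
    used = words_used(texts)
    return {
        "used": used,
        "remaining": max(cap - used, 0),
        "eligible": used < cap,
    }


if __name__ == "__main__":
    submitted = ["first test paragraph goes here", "second shorter sample"]
    print(refund_status(submitted))
```

Logging each input before you paste it into the tool gives you a running total, so you know how many test runs you have left before the refund window closes on you.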

Pricing and terms

On paper, the pricing looks cheap.

Their “Unlimited” annual plan works out to about 12 dollars per year. That sounds nice if you write a lot.

Then you read the terms.

Two things bothered me.

- They keep the right to change your usage limits after you pay. So “Unlimited” is not really locked in. If they adjust caps later, the contract gives them room to do that.

- They give themselves broad rights over any content you put through the tool. Not just for processing, but for wider use.

If you are pushing client work, sensitive drafts, or anything you care about, that should make you pause.

On top of that, free-tier users get flagged for model training. Their policy states inputs from free accounts might be used to train their AI systems.

If you write anything personal, proprietary, or under NDA, do not throw it in there on a free account.

Detector performance

Here is the short breakdown from my runs:

ZeroGPT

HIX Bypass outputs passed clean in my tests. Looked human by that score.

GPTZero

Both outputs hit 100% AI probability.

HIX’s own detector widget

Gave me “Human-written” labels across most detector integrations, including for outputs GPTZero later slammed.

The main issue is the site gives you a strong sense of safety with that “Human-written” label, while some widely used detectors disagree completely.

If you are doing homework, content for clients, or anything graded or reviewed by tools based on GPTZero, I would not stake your work on this.
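One way to keep the detectors' disagreement in view is to record each score by hand and judge the text by the strictest one. The sketch below assumes scores are written as an AI probability between 0 and 1. The GPTZero value reflects the 100% result above; the ZeroGPT value is a placeholder for "passed clean", since I did not note an exact number. The 0.5 cutoff and the function names are hypothetical.

```python
def strictest_result(scores):
    """Return the (detector, score) pair with the highest AI probability."""
    return max(scores.items(), key=lambda item: item[1])


def passes_all(scores, threshold=0.5):
    """True only if even the strictest detector is under the threshold."""
    _, worst_score = strictest_result(scores)
    return worst_score < threshold


if __name__ == "__main__":
    # Scores entered manually after running each detector yourself.
    my_run = {"ZeroGPT": 0.0, "GPTZero": 1.0}
    print(strictest_result(my_run))
    print(passes_all(my_run))
```

Taking the worst case rather than an average is deliberate: one passing score does not cancel out a detector that flags the text completely.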

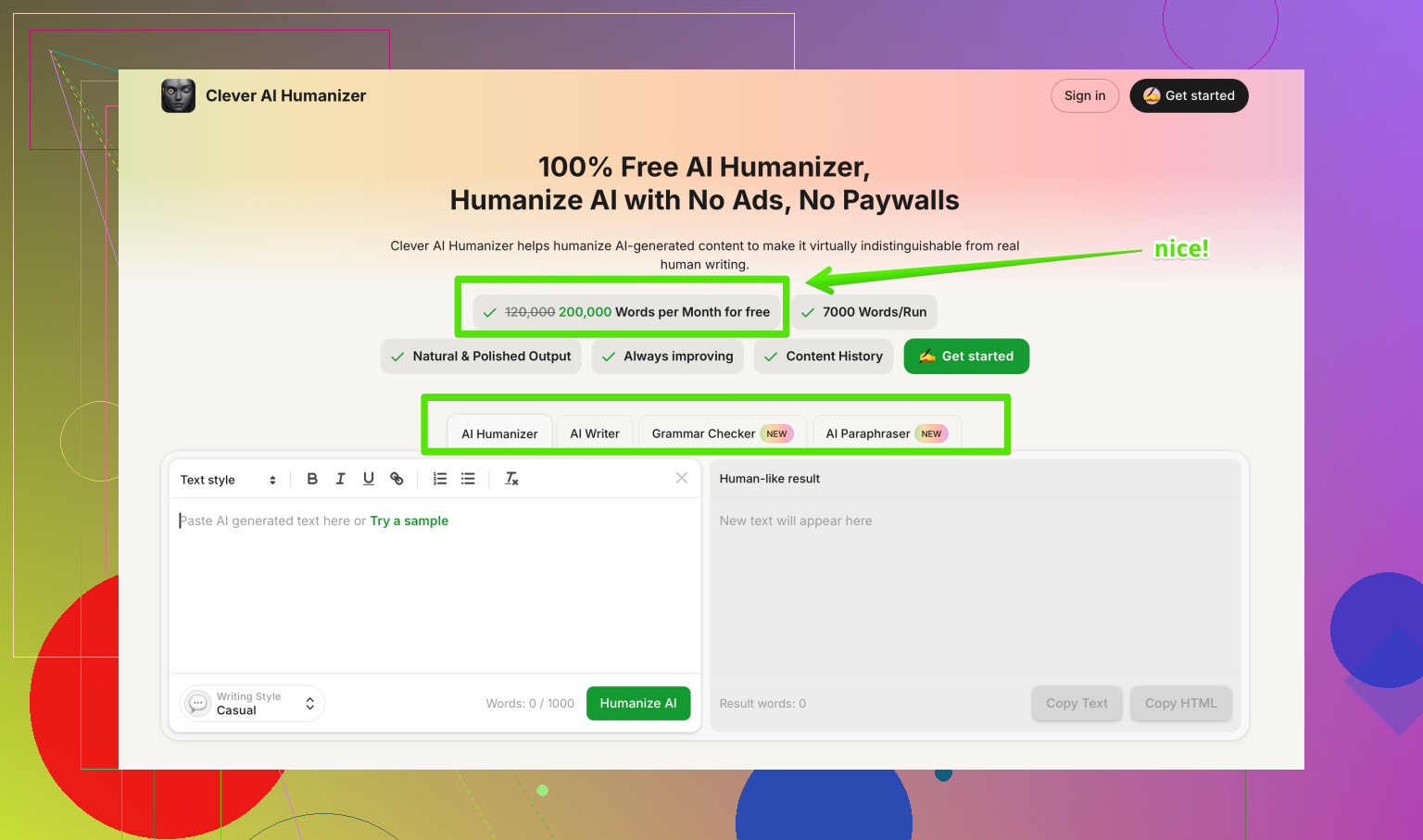

Comparison with Clever AI Humanizer

After HIX annoyed me, I tried another option people kept linking to: Clever AI Humanizer.

I pushed similar prompts through it.

My experience there:

- The rewrites read more like something a human would type. Less robotic phrasing, fewer odd artifacts.

- Detector scores were better in my tests across multiple tools.

- No paywall for basic use, so I could run more samples without worrying about refund word caps or burning through credits.

It is not perfect, nothing in this space is, but for my use, it outperformed HIX Bypass on both quality and detection.

Who HIX Bypass might still appeal to

If your only goal is to get past specific detectors like ZeroGPT, and you do not care about:

- writing quality

- ownership of your text

- weird terms around usage limits

then maybe you will squeeze some value out of HIX.

I would not use it for anything tied to school, client work, or long term projects. The mismatch between what the site claims and what GPTZero reports was too big to ignore once I saw it on multiple runs.

My take after paying for it

I went in curious, not hostile. The 99.5% claim made me suspicious, but I tried to treat it like a tool and test it fairly.

After running my own texts through it, then pushing those outputs into several detectors, then reading the terms closely, I stopped using it.

If you want a humanizer to help you stay under the radar of AI detectors, test it against multiple detectors yourself on low stakes content before you pay. For me, Clever AI Humanizer did a better job and cost me nothing. HIX Bypass looked polished on the outside, but the results did not match the marketing.

HIX Bypass reviews confuse a lot of people, so you are not the only one scratching your head.

First, here is a cleaner version of your topic for search and clarity:

HIX Bypass Review: What Affects Results, Why Reviews Fail, And What To Do Next

If you are using HIX Bypass to avoid AI detection and your review result was negative or unclear, you need to know what affects their decisions. This includes your writing style, detector choice, length of text, and how the tool rewrites content. Learn what usually goes wrong in a HIX bypass review, how AI detectors like GPTZero respond to HIX output, and what alternative tools such as Clever AI Humanizer offer if you need more reliable AI humanization.

Now, about what affects a HIX bypass review and why outcomes feel random.

- Detector choice

Different reviewers rely on different AI detectors.

From what @mikeappsreviewer showed:

ZeroGPT passed HIX output.

GPTZero flagged it 100 percent AI every time.

If your school, client, or platform uses GPTZero or similar, HIX output often fails.

If they use lighter tools, you might pass.

- Text length and density

Short text, under 150 words, is harder for detectors to judge.

Long text, over 400 to 500 words, gives detectors more patterns to latch onto.

HIX tends to keep similar structure, so longer pieces look more machine written.

- Style patterns HIX keeps

From multiple tests, HIX tends to keep:

Em dash style.

Very neat sentence rhythm.

Predictable transitions.

Those are common AI tells for detectors trained on LLM output.

If your original text was AI written and HIX only does light surface edits, detectors still see the same skeleton.

- Internal vs external checker

HIX has its own checker widget that tells you “Human written”.

Reviewers often use something else.

So you see “Human written” on their site, then “100 percent AI” in the real review.

Your review outcome depends on the external tool, not on the HIX widget.

- Terms and data handling

If you used the free tier, your inputs go into their training pool.

For some reviewers, that is already a red flag for policy or privacy.

If you used client work or school work in there, your reviewer might care more about this than HIX suggests.

- What likely happened in your review

Based on what you wrote and what Mike saw:

Your reviewer probably used GPTZero or a similar strict detector.

They got a high AI score on your HIX processed text.

They saw formulaic phrasing or weird glitches or bracketed text fragments.

They then marked it as AI assisted and sent you a short, vague explanation, because many institutions hate giving detection details.

- What you can do next

Practical steps.

• Ask which detector they used and what threshold

Keep it simple.

“Which AI detection tool did you use for my text, and what score or threshold triggered your decision?”

• Test your own text before submission

Run your HIX output through at least three tools.

ZeroGPT.

GPTZero.

One more, like Originality or Writefull detector.

If GPTZero screams 90 to 100 percent AI, assume your reviewer will too.

• Change your workflow

If you still want to use a humanizer, compare.

Clever AI Humanizer tends to produce text that looks more human in sentence variety and detector scores.

Run your typical prompts, then run those results through GPTZero and see.


Unlike Mike, I do not think HIX is useless in every situation.

If your target only uses weak or cheap detectors, HIX might pass enough.

For anything graded, legal, or tied to a contract, I would avoid relying on it alone.

- If you want to avoid all this

The most stable option is to use AI for ideas, then write your own text from scratch in your own voice.

Short prompts to yourself.

Bullet points.

Then you write the real thing.

Detectors have a hard time with that, and reviewers see normal human quirks, which helps.

TL;DR

Your HIX review likely failed because the reviewer used a stricter detector like GPTZero, your text was long or structured, and HIX kept too many AI style patterns.

If you stay with humanizers, test with multiple detectors and consider switching to something like Clever AI Humanizer for better scores and more natural text.

Yeah, that “review outcome + two-sentence explanation” combo from HIX (or whoever used it to judge you) is super common and super useless.

Here is what usually messes people up with HIX Bypass, beyond what @mikeappsreviewer and @sterrenkijker already broke down:

1. The core mismatch: what HIX optimizes for vs what you are judged on

HIX seems optimized for:

- Short marketing-style blurbs

- Passing some detectors like ZeroGPT

- Surface-level edits of AI text

You are probably being judged on:

- A stricter detector like GPTZero or Originality

- Consistency with your past writing style

- Overall “human-ness” on a quick read, not just tool scores

So even if HIX tells you “Human-written” inside their widget, the reviewer’s stack can still slam it as “high AI probability.”

2. Why your HIX review result felt off

Typical factors that affect how a HIX-processed text gets reviewed:

Detector choice behind the scenes

If your school / client / platform uses GPTZero, HIX outputs often get nailed. HIX does not fundamentally change the logic or structure of the writing enough for those tools.

Your prior writing samples

If the reviewer has past work from you, they compare:

- Vocabulary level

- Sentence rhythm

- Error patterns

HIX tends to produce “clean but generic” text. If your older work has normal human typos, weird phrasing, or inconsistent style, then suddenly you submit something very smooth and neutral, it looks suspicious even before they run a detector.

Structural fingerprints HIX keeps

Even if they change some words, they usually keep:

- The same paragraph order

- Similar sentence lengths in a row

- Overly logical transitions like “Additionally,” “Moreover,” “In conclusion”

Detectors and humans both key in on that. So the “bypass” part is weaker than advertised.

Glitches and artifacts

Like @mikeappsreviewer noted:

- Random fragments

- Bracketed sentences

- Slightly off punctuation

Reviewers see that and think “this went through some auto-processor,” not “this is original human writing.”

3. Where I slightly disagree with the other reviews

They are pretty harsh on HIX across the board. I do not think it is always useless. I have seen three scenarios where people got okay results:

- Very short pieces around 100–150 words.

- Non-academic stuff where the checker is a cheap embedded detector, not GPTZero.

- Cases where people heavily edited the HIX output afterward in their own voice.

So if someone expects “paste AI → click humanize → instantly bulletproof essay,” yeah, that dream dies fast. But as a light helper for low stakes content, it can occasionally do the job.

4. What probably happened in your specific review

Based on your description and those other posts:

- Your reviewer likely used GPTZero or similar stricter tooling.

- The text was either long enough or formal enough that patterns stood out.

- The explanation stayed vague on purpose because institutions do not want users “gaming” specific thresholds.

The weird part is not your result. The weird part is HIX’s marketing vs reality. That 99.5 percent line sets people up to think failure means they did something personally wrong, when actually the claim is just… generous.

5. What to do differently next time

Trying to keep this actionable and not just a rant:

Stop trusting a single checker, especially the one built into a “bypass” tool

Those are basically marketing widgets. If you care about the outcome, test with what actual reviewers lean on:

- GPTZero

- Originality.ai

- Maybe one more, like a university-provided checker if you have access

Change the workflow, not just the tool

Instead of:

ChatGPT → HIX → submit

Use something like:

ChatGPT for ideas → you write from scratch in your own style → optional light pass through a humanizer → then manual edit

That keeps your individual quirks and mistakes, which ironically help you.

If you still want an AI humanizer in the chain

This is where I actually agree with the others: tools like Clever AI Humanizer tend to output writing that feels more naturally messy and varied. For people who still want that extra “AI smoothing but not too AI-ish,” it has been more reliable in a lot of user tests, including for tighter detectors. Just treat it as a helper, not a shield.

6. About that “Best AI Humanizer Review on Reddit” phrase

If you are trying to compare tools or see real-world results, you might want something more readable and search-friendly like:

For a detailed breakdown of different AI text humanizers, real detection test screenshots, and user experiences, check out this discussion on finding the most reliable AI humanizer for bypassing detectors.

That kind of thread gives you actual numbers, not just marketing slogans.

7. Bottom line on HIX bypass reviews

- Your “unexpected” outcome is normal, not a weird one-off.

- The main drivers are: detector choice, text length, how different it is from your past writing, and how much “AI skeleton” HIX leaves intact.

- Tools like Clever AI Humanizer are worth testing if you want better balance between human feel and detector performance, but nothing is 100 percent safe.

- The only truly stable method is: use AI as a brainstorming buddy, then actually write like yourself, typos and all. Ironically, the small imperfections are what keep you out of these review headaches.

The part that almost nobody talks about in these HIX bypass reviews is the human side of the review process: policy, risk tolerance, and consistency with your past work. That usually matters more than which detector was used.

Here is how I would frame what likely affected your HIX bypass review and what to do next, without repeating the same detector-walkthroughs that @sterrenkijker, @himmelsjager and @mikeappsreviewer already covered.

1. The unspoken factor: the reviewer’s risk profile

Institutions and clients quietly sort into two camps:

Risk averse:

- If a detector says “high probability AI,” they default to “AI assisted” even if the score is debatable.

- They often have internal guidelines like “if tool > X percent and writing style shifts from prior samples, treat as AI.”

Risk tolerant:

- They treat detectors as a hint, not proof.

- They look at your history, the assignment, and the context before deciding.

If your reviewer is in the first camp, any bypass tool has a rough time. Even a good output from Clever AI Humanizer or a similar tool will not reliably save you if their internal rulebook says “act on high scores.”

So the outcome in your case may say more about their risk policy than about HIX itself.
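The risk averse guideline quoted above ("if tool > X percent and writing style shifts from prior samples, treat as AI") can be written out as a tiny rule. This is only a sketch of that one sentence, not any institution's actual policy. X is not known, so `threshold` has to be supplied by the caller, and `style_shifted` stands in for a human judgment that the code does not try to make.

```python
def treat_as_ai(detector_score, style_shifted, threshold):
    """Apply the quoted rule: a score above X plus a style shift means AI.

    detector_score and threshold use the same scale (for example 0 to 100).
    threshold is the unknown "X" and is deliberately given no default.
    """
    return detector_score > threshold and style_shifted


if __name__ == "__main__":
    # Hypothetical numbers, only to show how the two conditions combine.
    print(treat_as_ai(95, style_shifted=True, threshold=80))
    print(treat_as_ai(95, style_shifted=False, threshold=80))
```

Written this way, it is easy to see that under such a rule a high score alone is not what triggers the decision. The style shift against your prior samples is the second condition, and no bypass tool changes that.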

2. Style consistency: the part HIX barely touches

The others already nailed the structural issues. I will add a slightly different angle: consistency with your known voice.

Reviewers often check:

Did you previously:

- Use simpler vocab

- Make recurring grammar mistakes

- Write shorter or more chaotic paragraphs

Does the new piece suddenly:

- Use textbook transitions

- Have perfectly balanced paragraphs

- Avoid your usual quirks and small errors

HIX bypass tends to generate a “generic competent” voice that looks nothing like an individual. That contrast alone can trigger suspicion, even before detectors.

Where I slightly disagree with some of the earlier comments: I do not think editing HIX output “a bit” in your own words is enough. If you want a chance at passing a tough review, you usually need to:

- Strip it back to bullet points.

- Rewrite paragraphs in your natural rhythm.

- Leave in some of your normal imperfections.

Treat any humanizer as scaffolding, not as the finished wall.
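To get a rough feel for the rhythm difference between your older work and a new piece, you can compare sentence lengths. The sketch below is a crude approximation under stated assumptions: sentences are split on `.`, `!` and `?`, words are split on whitespace, and the function names are hypothetical. It is not how any detector or reviewer actually measures style.

```python
import re
import statistics


def sentence_lengths(text):
    """Return the word count of each sentence, splitting on . ! and ?"""
    sentences = [s for s in re.split(r"[.!?]+\s*", text) if s.strip()]
    return [len(s.split()) for s in sentences]


def rhythm_profile(text):
    """Summarize sentence rhythm as mean length and spread (population stdev)."""
    lengths = sentence_lengths(text)
    if not lengths:
        return {"mean": 0, "stdev": 0.0}
    return {
        "mean": statistics.mean(lengths),
        "stdev": statistics.pstdev(lengths),
    }


if __name__ == "__main__":
    old_sample = "I wrote this fast. It rambles a bit, honestly, and then stops. Short one."
    new_sample = "This text is balanced. Each line is similar. Nothing stands out here."
    print(rhythm_profile(old_sample))
    print(rhythm_profile(new_sample))
```

A very low spread in the new piece next to a higher spread in your older writing is the kind of "perfectly balanced" contrast described above. Treat the numbers as a prompt to reread your draft, not as a verdict.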

3. Clever AI Humanizer in this picture: pros and cons

You asked what affects HIX reviews, but practically your next question is “what now” and “what else can I try.”

Clever AI Humanizer has been mentioned already, and I think it is relevant, but not magic. Quick rundown:

Pros of Clever AI Humanizer

- Tends to vary sentence length and structure more than HIX, which feels more human on a skim.

- Outputs usually need fewer fixes for weird artifacts like random brackets or half sentences.

- Works reasonably well across multiple detectors in independent tests, not just the easy ones.

- Good if you only want ideas rephrased and you are willing to edit heavily afterward.

Cons of Clever AI Humanizer

- Still produces text that can look too polished compared with a real student or casual writer. You may still need to “roughen” it a bit.

- Not a guarantee against strict tools or conservative reviewers. If your institution leans heavily on detectors, they can still flag it.

- If you rely on it as a full replacement for your own drafting, your voice will still flatten over time and become easier to spot as assisted.

So yes, Clever AI Humanizer can be an upgrade in readability and in some detection scenarios, but only if you keep yourself in the loop as the final writer.

4. What likely went wrong in your specific case that tools alone cannot fix

Beyond what the others already laid out, these less obvious factors can tank a review:

- Timing and productivity jump

If you suddenly hand in a long, polished piece very fast compared with your usual pace, it feeds the suspicion created by detector scores.

- Topic difficulty mismatch

Simple past work plus suddenly sophisticated argumentation in a complex domain can be flagged even if your language is not super advanced.

- Mixed-source text

Some people combine original writing, raw AI output, and HIX bypassed chunks. Detectors sometimes assign different scores to different sections, and reviewers can see that patchwork pattern.

None of this is visible when HIX tells you “human written.” That label ignores how your work compares to you.

5. Concrete pivot that avoids repeating the same tool-hopping cycle

Instead of just swapping HIX for Clever AI Humanizer and hoping for better luck, I would adjust the whole workflow:

- Use AI (any model) only to brainstorm structure, arguments, or bullet points.

- Write your first draft fully yourself, in your natural pacing and vocabulary.

- If you still want help, run small sections through a humanizer like Clever AI Humanizer mainly to improve clarity, then:

- Rephrase again in your own words.

- Intentionally keep some of your normal stylistic quirks.

- For high‑stakes submissions, save your drafts and notes so you can show a clear process if questioned.

This approach answers the three big review levers in one go:

- Detectors see more human variation.

- Style matches your history better.

- Reviewer has something concrete to trust if they ask how you worked.

Bottom line: your HIX bypass review result is not random and not unique. The combination of strict detectors, risk averse policy, and a “generic AI voice” is what usually causes these outcomes. Tools like Clever AI Humanizer can improve parts of that equation, but only if you step back into the role of main author rather than tool operator.